Install OpenCV 4.0 on macOS

Step 1. Install Xcode

1.1. First, install the latest Xcode.

Find the details and download it from the Apple developer website:

https://developer.apple.com/xcode/

Alternatively, open the Mac App Store, search for Xcode, and install it from there.

1.2. After the installation completes, open Xcode and accept the license agreement.

Step 2. Install Homebrew

2.1. Install the Mac community package manager, Homebrew.

$ ruby -e "$(curl -fsSL https://raw.githubusercontent.com/Homebrew/install/master/install)"

Then, update the Homebrew definitions:

$ brew update

2.2. Add Homebrew to PATH

To keep the following steps simple, add the Homebrew binary directory to PATH in your ~/.bash_profile file. The single quotes keep $PATH unexpanded, so it is resolved each time the shell starts.

$ echo "# Homebrew" >> ~/.bash_profile
$ echo 'export PATH=/usr/local/bin:$PATH' >> ~/.bash_profile
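After opening a new terminal, you may want to confirm that the Homebrew directory really comes first. The following is only a small sketch in Python: the helper name comes_before is made up for illustration, and it assumes Homebrew lives under /usr/local/bin as in this guide.

```python
import os


def comes_before(path_value, first, second):
    """Return True if `first` appears in a PATH-style string before `second`.

    Hypothetical helper for illustration. If `first` is absent the answer
    is False; if only `second` is absent the answer is True.
    """
    entries = path_value.split(os.pathsep)
    if first not in entries:
        return False
    if second not in entries:
        return True
    return entries.index(first) < entries.index(second)


if __name__ == "__main__":
    current = os.environ.get("PATH", "")
    # /usr/local/bin is the Homebrew location assumed throughout this guide.
    if comes_before(current, "/usr/local/bin", "/usr/bin"):
        print("Homebrew binaries take precedence over the system ones.")
    else:
        print("/usr/local/bin is missing from PATH or comes after /usr/bin.")
```

If the second message appears, open a new terminal or run source ~/.bash_profile and try again.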

Step 3. Install OpenCV prerequisites using Homebrew

3.1. Install Python 3.6

$ brew install python3

Verify the Python installation with the commands below:

$ which python3
/usr/local/bin/python3
$ python3
Python 3.6.5 (default, Jun 17 2018, 12:13:06)
[GCC 4.2.1 Compatible Apple LLVM 9.1.0 (clang-902.0.39.2)] on darwin
Type "help", "copyright", "credits" or "license" for more information.
>>>
>>> exit()
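The same check can be scripted, which is handy when you come back to the machine later. This is a minimal sketch using only the standard library; the function name describe_python is an illustrative choice, not part of any tool used in this guide.

```python
import shutil
import sys


def describe_python():
    """Summarize the running interpreter and where python3 resolves on PATH."""
    return {
        "version": "{}.{}.{}".format(*sys.version_info[:3]),
        "executable": sys.executable,
        # shutil.which returns None when python3 is not found on PATH.
        "python3_on_path": shutil.which("python3"),
    }


if __name__ == "__main__":
    for key, value in describe_python().items():
        print(f"{key}: {value}")
```

On a setup like the one above, python3_on_path would be expected to point at /usr/local/bin/python3.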

3.2. Install CMake and QT (optional)

This step is only needed if you want to work on OpenCV projects with CMake and QT. If you only need Python, skip it.

$ brew install cmake
$ brew install qt5

Later in this installation we need the QT path, so store it in a variable:

$ QT5PATH=/usr/local/Cellar/qt/5.12.2

*Make sure this path exists in your environment, since the version directory may differ on your machine. Check it with ls -l /usr/local/Cellar/qt.
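If you are unsure which version directory exists, a few lines of Python can list the candidates. This is a sketch under the assumption that Homebrew keeps QT under /usr/local/Cellar/qt, as in the path above; installed_versions is a hypothetical helper name.

```python
from pathlib import Path


def installed_versions(formula_dir):
    """Return the version directory names found under a Cellar formula folder.

    Names are sorted as plain strings, which is enough for a quick look.
    """
    root = Path(formula_dir)
    if not root.is_dir():
        return []
    return sorted(p.name for p in root.iterdir() if p.is_dir())


if __name__ == "__main__":
    # Location used in this guide; adjust it if your Homebrew prefix differs.
    cellar_qt = "/usr/local/Cellar/qt"
    versions = installed_versions(cellar_qt)
    if versions:
        for version in versions:
            print(f"QT5PATH={cellar_qt}/{version}")
    else:
        print(f"No QT installation found under {cellar_qt}")
```

Each printed line is a value you could assign to QT5PATH.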

Step 4. Install Python dependencies for OpenCV 4

In this step we install the Python dependencies for OpenCV 4.

$ sudo -H pip3 install -U pip numpy

Now that pip is up to date, we can install virtualenv and virtualenvwrapper, two tools for managing virtual environments. Python virtual environments are a best practice for Python development, and I recommend taking full advantage of them.

$ sudo -H python3 -m pip install virtualenv virtualenvwrapper
$ VIRTUALENVWRAPPER_PYTHON=/usr/local/bin/python3
$ echo "VIRTUALENVWRAPPER_PYTHON=/usr/local/bin/python3" >> ~/.bash_profile
$ echo "# Virtual Environment Wrapper" >> ~/.bash_profile
$ echo "source /usr/local/bin/virtualenvwrapper.sh" >> ~/.bash_profile
$ source /usr/local/bin/virtualenvwrapper.sh
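Because these settings are appended with echo, it is easy to end up with a profile that is missing one of them. As a rough sketch, the check below reads ~/.bash_profile and reports which of the two functional lines from the commands above are absent; the names REQUIRED_LINES and missing_lines are illustrative only.

```python
from pathlib import Path

# The two functional lines this guide appends to ~/.bash_profile.
REQUIRED_LINES = [
    "VIRTUALENVWRAPPER_PYTHON=/usr/local/bin/python3",
    "source /usr/local/bin/virtualenvwrapper.sh",
]


def missing_lines(profile_text, required=REQUIRED_LINES):
    """Return the required lines that do not appear in the profile text."""
    present = {line.strip() for line in profile_text.splitlines()}
    return [line for line in required if line not in present]


if __name__ == "__main__":
    profile = Path.home() / ".bash_profile"
    text = profile.read_text() if profile.is_file() else ""
    for line in missing_lines(text):
        print(f"missing from {profile}: {line}")
```

No output means both lines are already present.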

The virtualenvwrapper tool provides several terminal commands:

- mkvirtualenv: Makes a virtual environment
- rmvirtualenv: Destroys a virtual environment
- workon: Activates a virtual environment
- deactivate: Deactivates the current virtual environment

Refer to the virtualenvwrapper documentation for more information.

Now, let’s create a Python virtual environment for OpenCV.

The command below names the virtual environment for Python 3 and OpenCV 4 py3cv4. You may choose any name you like.

$ mkvirtualenv py3cv4 -p python3

The output should look similar to this (the sample paths below show an environment named cv, so yours will show py3cv4 instead):

Running virtualenv with interpreter /usr/local/bin/python3
Using base prefix '/usr/local/Cellar/python/3.6.5_1/Frameworks/Python.framework/Versions/3.6'
New python executable in /Users/admin/.virtualenvs/cv/bin/python3.6
Also creating executable in /Users/admin/.virtualenvs/cv/bin/python
Installing setuptools, pip, wheel... done.
virtualenvwrapper.user_scripts creating /Users/admin/.virtualenvs/cv/bin/predeactivate
virtualenvwrapper.user_scripts creating /Users/admin/.virtualenvs/cv/bin/postdeactivate
virtualenvwrapper.user_scripts creating /Users/admin/.virtualenvs/cv/bin/preactivate
virtualenvwrapper.user_scripts creating /Users/admin/.virtualenvs/cv/bin/postactivate
virtualenvwrapper.user_scripts creating /Users/admin/.virtualenvs/cv/bin/get_env_details
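To double-check from Python that the environment is active, you can compare the interpreter prefixes. This is a common idiom rather than anything specific to virtualenvwrapper, and the function name in_virtualenv is my own choice for this sketch.

```python
import sys


def in_virtualenv():
    """Return True when the interpreter runs inside a virtual environment."""
    # Older virtualenv releases set sys.real_prefix; venv and newer
    # virtualenv releases make sys.base_prefix differ from sys.prefix.
    if hasattr(sys, "real_prefix"):
        return True
    return getattr(sys, "base_prefix", sys.prefix) != sys.prefix


if __name__ == "__main__":
    print("virtual environment active:", in_virtualenv())
    print("prefix:", sys.prefix)
```

Run inside the activated environment, the prefix line should point into ~/.virtualenvs.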

Next, let’s install NumPy, CMake, and other libraries while we’re inside the environment.

$ pip install cmake numpy scipy matplotlib scikit-image scikit-learn ipython dlib
# quit virtual environment
$ deactivate

Step 5. Compile OpenCV 4 for macOS

5.1. Download OpenCV 4

Navigate to the working folder and download both opencv and opencv_contrib.

The commands below create the opencv and opencv_contrib folders inside the home folder.

$ cd ~
$ git clone https://github.com/opencv/opencv.git
$ cd opencv
$ git checkout master
$ cd ..
$ git clone https://github.com/opencv/opencv_contrib.git
$ cd opencv_contrib
$ git checkout master
$ cd ..

Navigate back to the OpenCV repo, then create and enter a build directory.

$ cd ~/opencv
$ mkdir build
$ cd build

Now we are ready to run CMake.

Make sure to run the workon command before the cmake command, as shown below.

Note: I am using py3cv4 as the virtual environment name. If yours differs, change the paths in the command below to match your own environment. The same applies to the Python version in OPENCV_PYTHON3_INSTALL_PATH.

This takes a while (about 50 minutes in my environment ^^;).

$ workon py3cv4
$ cmake -D CMAKE_BUILD_TYPE=RELEASE \
    -D CMAKE_INSTALL_PREFIX=/usr/local \
    -D OPENCV_EXTRA_MODULES_PATH=../../opencv_contrib/modules \
    -D OPENCV_PYTHON3_INSTALL_PATH=~/.virtualenvs/py3cv4/lib/python3.6/site-packages \
    -D PYTHON3_LIBRARY=`python -c 'import subprocess ; import sys ; s = subprocess.check_output("python-config --configdir", shell=True).decode("utf-8").strip() ; (M, m) = sys.version_info[:2] ; print("{}/libpython{}.{}.dylib".format(s, M, m))'` \
    -D PYTHON3_INCLUDE_DIR=`python -c 'import distutils.sysconfig as s; print(s.get_python_inc())'` \
    -D PYTHON3_EXECUTABLE=$VIRTUAL_ENV/bin/python \
    -D BUILD_opencv_python2=OFF \
    -D BUILD_opencv_python3=ON \
    -D INSTALL_PYTHON_EXAMPLES=ON \
    -D WITH_TBB=ON \
    -D WITH_V4L=ON \
    -D INSTALL_C_EXAMPLES=OFF \
    -D OPENCV_ENABLE_NONFREE=ON \
    -D WITH_OPENGL=ON \
    -D BUILD_EXAMPLES=ON ..
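The Python-related values in the command above depend on the interpreter version, so it can help to print them from the interpreter itself before running cmake. The sketch below uses the standard sysconfig module; the function name opencv_python_flags is hypothetical, and the values it prints are only candidates that describe whichever interpreter runs the script, so run it inside the activated virtual environment and compare.

```python
import sys
import sysconfig


def opencv_python_flags():
    """Collect candidate values for the Python-related CMake flags.

    Illustrative only: the result reflects the interpreter running this
    script, not necessarily the one CMake will detect on its own.
    """
    paths = sysconfig.get_paths()
    return {
        # Directory for platform-specific packages (site-packages).
        "OPENCV_PYTHON3_INSTALL_PATH": paths["platlib"],
        # Directory that holds the Python header files.
        "PYTHON3_INCLUDE_DIR": paths["include"],
        "PYTHON3_EXECUTABLE": sys.executable,
    }


if __name__ == "__main__":
    for flag, value in opencv_python_flags().items():
        print(f"-D {flag}={value}")
```

If the printed site-packages path shows a different Python version than the one in your cmake command, adjust the command before building.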

Notes:

-OPENCV_ENABLE_NONFREE=ON

This is required for OpenCV 4 if you want access to patented algorithms for educational purposes.

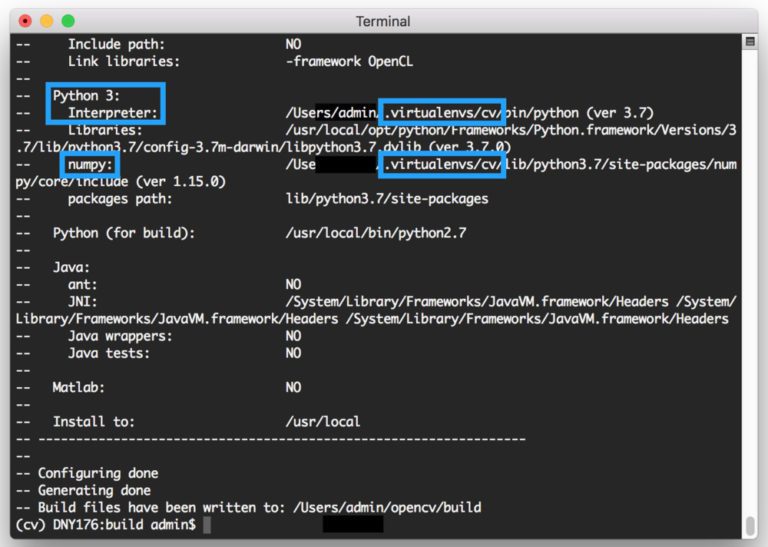

-Once CMake has finished, you will see the following information in the terminal:

*If you need QT in your project, add the flags below to the cmake command. QT5PATH must already be defined as described in step 3.2 (Install CMake and QT).

-D CMAKE_PREFIX_PATH=$QT5PATH \
-D CMAKE_MODULE_PATH="$QT5PATH"/lib/cmake \

Up to this step, if your CMake output is good to go you can kick off the compilation via:

$ make -j$(sysctl -n hw.physicalcpu) $ sudo make install

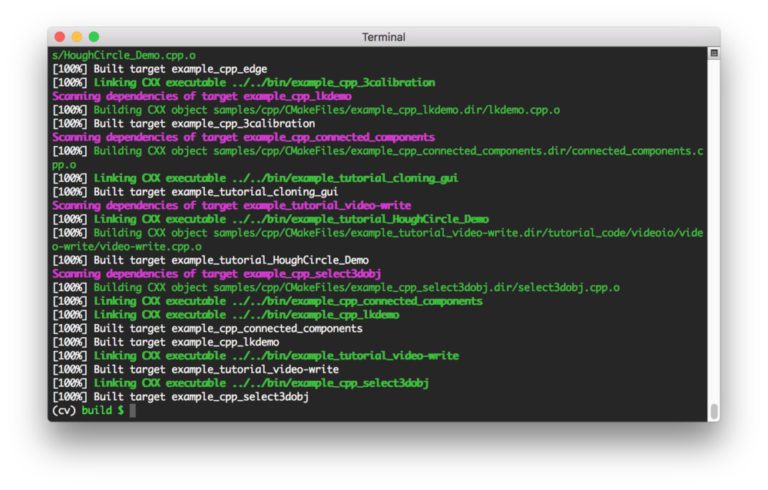

When the process is finished 100%, the screenshot should be like this:

5.2. Install imutils

$ workon py3cv4
$ pip install imutils

Step 6. Test your macOS + OpenCV 4

# Activate your virtual environment
$ workon py3cv4
$ python
>>> import cv2
>>> cv2.__version__
'4.0.0'
>>> exit()
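The interactive check can also be written as a small script. This is a sketch, not an official OpenCV tool: is_opencv4 and check_opencv are names made up here, and using the xfeatures2d attribute as a sign that the opencv_contrib modules were built is an assumption that holds for typical contrib builds.

```python
def is_opencv4(version):
    """Return True if a cv2.__version__-style string has major version 4."""
    major = version.split(".")[0]
    return major.isdigit() and int(major) == 4


def check_opencv():
    """Try to import cv2 and return (ok, message) describing what was found."""
    try:
        import cv2
    except ImportError as exc:
        return False, f"cv2 could not be imported: {exc}"
    # xfeatures2d comes from opencv_contrib, so its presence hints that
    # OPENCV_EXTRA_MODULES_PATH was picked up during the build.
    has_contrib = hasattr(cv2, "xfeatures2d")
    ok = is_opencv4(cv2.__version__)
    return ok, f"OpenCV {cv2.__version__}, contrib modules found: {has_contrib}"


if __name__ == "__main__":
    ok, message = check_opencv()
    print("OK" if ok else "PROBLEM", "-", message)
```

Run it with python inside the py3cv4 environment.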

Let’s Run Our First OpenCV Application!

You may clone one of my OpenCV samples from the GitHub repository below.

https://github.com/fahmifahim/opencv

# Activate your virtual environment
$ workon py3cv4
$ git clone https://github.com/fahmifahim/opencv.git
Cloning into 'opencv'...
remote: Enumerating objects: 46, done.
remote: Counting objects: 100% (46/46), done.
remote: Compressing objects: 100% (42/42), done.
remote: Total 46 (delta 2), reused 46 (delta 2), pack-reused 0
Unpacking objects: 100% (46/46), done.
$ ls opencv
$ cd opencv/1_experiment/1_face_recognition_adrian/
# Execute the Python program for realtime face recognition:
$ python recognize_video.py --detector face_detection_model \
    --embedding-model openface_nn4.small2.v1.t7 \
    --recognizer output/recognizer.pickle \
    --le output/le.pickle
# Change directory to the face detection program
$ cd ../2_face_detection_deeplearning
$ python detect_faces.py --image leaders.jpg --prototxt deploy.prototxt.txt --model res10_300x300_ssd_iter_140000.caffemodel
# When you are done, deactivate the virtual environment
$ deactivate
